An XML sitemap is a file that lists the important pages of your website. It helps search engines like Google, Bing, and Yahoo discover and index your content more efficiently. Search engines can discover pages by following links, but a sitemap makes it far more likely that all your important pages are found, especially on new websites or sites with complex structures. This guide explains what sitemaps are, why they matter for SEO, and how to generate one for your website for free.

What Is an XML Sitemap?

An XML sitemap is a file written in XML format that lists URLs for a site along with optional metadata about each URL. This metadata can include when the page was last updated, how often it changes, and how important it is relative to other pages on the site. Search engines may use this information to crawl your site more intelligently.

The sitemap protocol was introduced by Google in 2005 and has since been adopted by other major search engines. The protocol defines a standard XML format that the major search engines understand, making it a widely supported way to communicate your site's structure.

Sitemaps are particularly useful for websites that are new, have few external links, use rich media content, or have large archives of content that isn't well linked. These sites might not be discovered completely through standard crawling, so a sitemap helps search engines find the pages that matter.

Why Sitemaps Matter for SEO

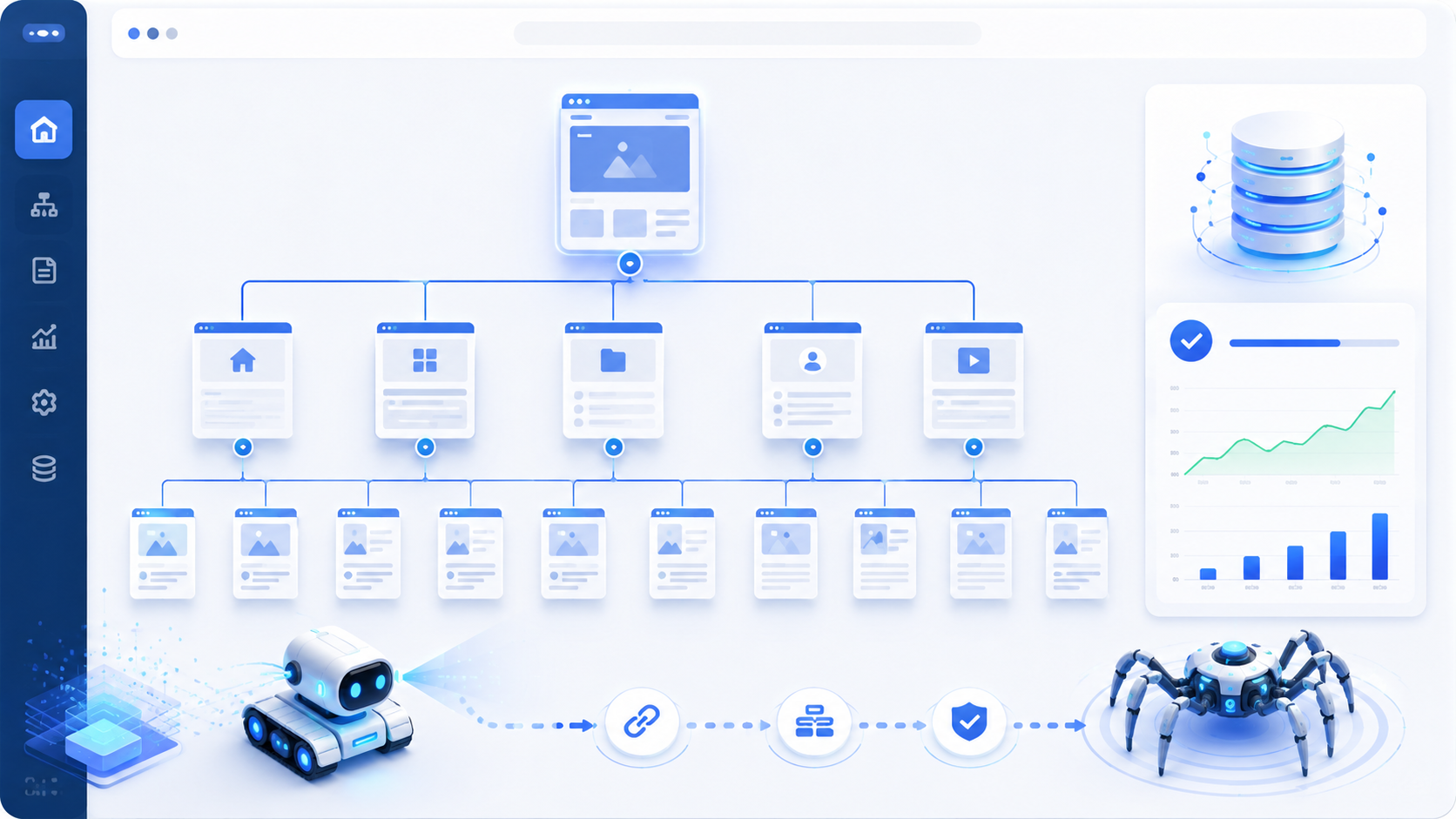

Sitemaps provide a roadmap of your website to search engines. Without a sitemap, search engines rely on following internal links to discover pages. If some pages are deeply nested or have few internal links pointing to them, they might never be discovered. A sitemap explicitly tells search engines about these pages, improving your site's coverage in search results.

For new websites, sitemaps are especially important. New sites typically have few external links, so search engines have limited ways to discover them. Submitting a sitemap helps search engines find all your pages quickly, which can accelerate the indexing process and help your site appear in search results faster.

Sitemaps can also include metadata that helps search engines crawl your site more efficiently. The last modified date tells search engines whether they need to recrawl a page. The change frequency provides guidance on how often to recrawl. This information helps search engines prioritize which pages to crawl and when, making more efficient use of their crawl budget.

Sitemap Elements Explained

The <url> element contains information about a specific URL on your site. Each URL you want included in your sitemap gets its own <url> block with the associated metadata, and all <url> blocks sit inside a single root <urlset> element.

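The <loc> element specifies the URL of the page. This must be a complete URL including the protocol (http:// or https://) and must be shorter than 2,048 characters. URLs must be properly encoded and XML-escaped (for example, & is written as &amp;). List each URL exactly as your server serves it, including letter case, because URL paths can be case-sensitive. This is the only required element inside <url> and the most important one: it's the actual link to the page you want indexed.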

The <lastmod> element indicates when the page was last modified. This helps search engines understand if they need to recrawl the page. The protocol uses the W3C Datetime format; the date-only form YYYY-MM-DD is the simplest option and is widely accepted. Only update this value when the page content actually changes, since accurate last modified dates help search engines prioritize crawling recently updated content.

The <changefreq> element suggests how frequently the page is likely to change. Values include always, hourly, daily, weekly, monthly, yearly, and never. This is only a hint about how often to recrawl the page. Search engines may crawl more or less frequently based on their own algorithms, and Google's documentation states that it ignores this value.

The <priority> element indicates the importance of this page relative to other pages on your site. Valid values range from 0.0 to 1.0, with 1.0 being most important and 0.5 being the default. This does not affect your site's ranking in search results. It is meant as a hint about which pages to crawl first, but search engines are free to disregard it, and Google's documentation states that it ignores this value.
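
The elements above can be put together with a short script. The following is a minimal sketch in Python, assuming you already have your pages as a list of dictionaries; the function name build_sitemap and the example.com URLs are illustrative and not part of any tool mentioned in this guide.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol (version 0.9).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of page dicts.

    Each dict needs a "loc" key; "lastmod", "changefreq", and
    "priority" are optional and only written when present.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        for tag in ("lastmod", "changefreq", "priority"):
            if tag in page:
                ET.SubElement(url, tag).text = str(page[tag])
    ET.indent(urlset)  # pretty-printing; requires Python 3.9+
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical pages, for illustration only.
pages = [
    {"loc": "https://example.com/", "lastmod": "2024-01-15", "priority": 1.0},
    {"loc": "https://example.com/search?q=shoes&page=2", "changefreq": "weekly"},
]
print(build_sitemap(pages))
```

ElementTree escapes special characters for you, so the & in the second URL is written as &amp; in the output. If you write the XML by hand, you have to do that escaping yourself.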

Types of Sitemaps

The most common type is the standard XML sitemap that lists web pages. However, there are specialized sitemaps for different types of content. Image sitemaps list image URLs and were originally designed to carry extra details such as caption, title, and license. Google has since deprecated those extra tags, so for Google the image URL itself is what matters. Image sitemaps help search engines discover images that might be embedded in JavaScript or loaded dynamically.

Video sitemaps provide information about video content on your site, including title, description, duration, and thumbnail. This helps search engines index your videos and display them in video search results. Video sitemaps are particularly useful for sites with significant video content.

News sitemaps are specifically for news sites and help Google News discover and index news articles quickly. These sitemaps include publication date and title information that helps Google News determine the relevance and timeliness of articles.

For very large sites, you can use a sitemap index file that links to multiple sitemap files. A single sitemap can contain up to 50,000 URLs and must be no larger than 50MB uncompressed. If your site exceeds these limits, you need to split your sitemap into multiple files and use a sitemap index to link them together.
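
As a rough illustration of the splitting step, the sketch below chunks a URL list at the 50,000-URL limit and builds a sitemap index that points at each child file. The helper names and the sitemap-1.xml naming scheme are assumptions made for this example, and the sketch does not check the 50MB size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # limit from the sitemap protocol


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Return sitemap index XML that points at each child sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = sitemap_url
    xml_bytes = ET.tostring(index, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical site with 120,000 URLs, which needs three child sitemaps.
urls = [f"https://example.com/page-{n}" for n in range(120_000)]
chunks = chunk_urls(urls)
child_files = [
    f"https://example.com/sitemap-{i + 1}.xml" for i in range(len(chunks))
]
index_xml = build_sitemap_index(child_files)
print(len(chunks), "child sitemaps")
```

Each chunk would then be written out as its own sitemap file, and only the index file needs to be submitted to search engines.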

How to Create a Sitemap

You can create a sitemap manually by writing the XML code according to the sitemap protocol. This approach gives you complete control over the file but requires technical knowledge and careful attention to formatting. Manual creation is practical for small sites with few pages but becomes impractical as your site grows.

Content management systems like WordPress, Shopify, and Squarespace typically include built-in sitemap generation or have plugins that automatically create and maintain sitemaps. If you're using a CMS, check whether it already generates a sitemap before creating one manually.

Our sitemap generator tool allows you to create XML sitemaps manually by adding URLs one at a time. This is useful for sites that don't have automated sitemap generation or for situations where you need to create a custom sitemap for specific purposes.

Sitemap Best Practices

Only include canonical URLs in your sitemap. Don't include duplicate content, redirected pages, or pages blocked by robots.txt. Focus on pages that provide value to users and that you want appearing in search results. Including low-quality or duplicate pages can dilute your crawl budget and might confuse search engines about which pages are most important.

Keep your sitemap updated whenever you add or remove important pages. For dynamic sites, consider generating sitemaps automatically. Large sites should split their sitemaps into multiple files linked by a sitemap index file. Our tool is designed for smaller sites with fewer than 50,000 URLs.

Submit your sitemap to search engines through their webmaster tools. Google Search Console, Bing Webmaster Tools, and other platforms allow you to submit sitemaps directly, which can speed up the discovery and indexing process. You typically only need to submit a sitemap once, because search engines re-fetch it periodically. What matters after that is keeping the file itself current so crawlers see your latest content.

Where to Place Your Sitemap

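The sitemap.xml file is conventionally placed in the root directory of your website, making it accessible at yourdomain.com/sitemap.xml. Under the sitemap protocol, a sitemap's location also limits its scope: a sitemap at yourdomain.com/blog/sitemap.xml can only list URLs under /blog/, so the root directory is the safest choice. Crawlers are not guaranteed to check any particular path on their own, so whichever location you choose, reference the sitemap in robots.txt or submit it through webmaster tools.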

You can specify the location of your sitemap in your robots.txt file using the Sitemap directive. This helps search engines discover your sitemap even if you haven't submitted it through webmaster tools. The format is: Sitemap: https://yourdomain.com/sitemap.xml
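
To check that a robots.txt file advertises your sitemap the way you expect, you can parse it with Python's standard library. This is a small sketch that uses an inline robots.txt string as sample data; the /admin/ rule is made up for the example.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content; the Disallow rule is illustrative only.
robots_txt = """\
User-agent: *
Disallow: /admin/

Sitemap: https://yourdomain.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# site_maps() (Python 3.8+) returns a list of sitemap URLs,
# or None when the file has no Sitemap line.
sitemaps = parser.site_maps()
print(sitemaps)
```

The Sitemap line is independent of any User-agent group, so it can appear anywhere in the file, and you can list more than one.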

Testing Your Sitemap

Before deploying your sitemap, validate it using an online sitemap validator or an XML schema check. Once the sitemap is live and submitted, the Sitemaps report in Google Search Console shows whether Google could fetch and read it and lists any errors or warnings.

Common errors include invalid XML formatting, incorrect URLs, URLs that return errors, or URLs that are blocked by robots.txt. Address these issues before deploying your sitemap to ensure search engines can process it correctly.
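
Some of these checks are easy to automate before you upload the file. The following is a minimal sketch of an offline check, assuming the sitemap is available as a string; check_sitemap is a hypothetical helper, and it only covers XML well-formedness, the URL count, and whether each <loc> is an absolute http(s) URL. It does not fetch any URL, so it cannot detect pages that return errors.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
MAX_URLS = 50_000  # per-file limit from the sitemap protocol


def check_sitemap(xml_text):
    """Return a list of human-readable problems found in a sitemap string."""
    try:
        root = ET.fromstring(xml_text.encode("utf-8"))
    except ET.ParseError as exc:
        return [f"invalid XML: {exc}"]

    problems = []
    entries = root.findall("sm:url", NS)
    if not entries:
        problems.append("no <url> entries found in the sitemap namespace")
    if len(entries) > MAX_URLS:
        problems.append(f"too many URLs: {len(entries)}")
    for entry in entries:
        loc = (entry.findtext("sm:loc", default="", namespaces=NS) or "").strip()
        parts = urlsplit(loc)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"not an absolute http(s) URL: {loc!r}")
    return problems


sample = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>/about</loc></url>"
    "</urlset>"
)
for problem in check_sitemap(sample):
    print(problem)
```

An empty result only means the file passed these basic checks. The search engine's own report remains the authority on how the sitemap was actually processed.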

After deployment, monitor your sitemap in Search Console to see which URLs are indexed and which might have issues. Search Console provides detailed reports on sitemap coverage, indexing status, and crawl errors.

Sitemap vs. robots.txt

Sitemaps and robots.txt serve different but complementary purposes. Robots.txt controls crawler access by telling search engines which parts of your site they can and cannot crawl. Sitemaps provide a list of important pages you want search engines to discover and index.

Use robots.txt to block crawlers from unnecessary or sensitive areas. Use sitemaps to highlight important pages that should be crawled. The two work together to guide search engine crawling behavior—robots.txt excludes unwanted crawling, while sitemaps ensure important content is discovered.

Make sure the two files do not contradict each other. Don't include pages in your sitemap that are blocked by robots.txt, because this sends mixed signals to search engines. Review both files together whenever you change either one.
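
One way to catch such conflicts is to run every sitemap URL through a robots.txt parser. The sketch below uses Python's standard library; blocked_sitemap_urls is a hypothetical helper and the rules and URLs are sample data. Note that the standard-library parser follows the basic robots.txt rules and may not match every search engine's handling of wildcard patterns.

```python
from urllib.robotparser import RobotFileParser


def blocked_sitemap_urls(robots_txt, sitemap_urls, user_agent="*"):
    """Return the sitemap URLs that robots.txt disallows for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in sitemap_urls if not parser.can_fetch(user_agent, url)]


# Sample data for illustration only.
robots_txt = """\
User-agent: *
Disallow: /private/
"""
urls = [
    "https://example.com/",
    "https://example.com/private/report",
    "https://example.com/blog/post",
]
conflicts = blocked_sitemap_urls(robots_txt, urls)
print(conflicts)
```

Any URL this returns should either be removed from the sitemap or unblocked in robots.txt, depending on whether you want it indexed.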

When Sitemaps Are Most Important

New websites benefit most from sitemaps because they have few external links and are not yet well-established in search engines. Submitting a sitemap helps search engines discover all pages quickly, which can accelerate the indexing process.

Websites with rich media content like videos and images benefit from specialized sitemaps that help search engines discover this content. Media files might not be discovered through standard crawling, especially if they're loaded dynamically or embedded in JavaScript.

Large sites with thousands or millions of pages rely on sitemaps to improve coverage. Without sitemaps, search engines might miss important pages, especially those deep in the site structure or with few internal links pointing to them.

Sites with archived content that isn't well-linked can use sitemaps to ensure older content remains discoverable. This is particularly important for news sites, blogs, and content libraries with extensive archives.

Conclusion

XML sitemaps are a fundamental component of technical SEO that help search engines discover and index your website's content efficiently. Whether your site is new or established, large or small, a sitemap makes it much more likely that search engines find all your important pages. Create your sitemap using our free generator tool, place it in your root directory, submit it to search engine webmaster tools, and keep it updated as your site evolves. With proper sitemap implementation, you can improve your site's coverage in search results and give your valuable content a better chance of being seen.